If $v$ is an eigenvector of $A$ with eigenvalue $\lambda$ (a "$\lambda$-eigenvector") then so is $cv$ for any nonzero $c \in \mathbb{C}$.

34. Eigenspaces

In all the examples we've seen so far, the eigenvectors have all had a free variable in them. For example, in the last video, we found the eigenvectors for the matrix to be:

-

for , ,

-

for , ,

-

for ,

For the matrix we found the eigenvalue had eigenvectors . All of these have the free parameter .

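This is a general fact: if $Av = \lambda v$ with $v \neq 0$, and $c \in \mathbb{C}$ is nonzero, then $A(cv) = c(Av) = c(\lambda v) = \lambda(cv)$ and $cv \neq 0$, so $cv$ is again a $\lambda$-eigenvector.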
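Here is a quick numerical sanity check of this fact, as a sketch only. The matrix $\begin{pmatrix}2&1\\1&2\end{pmatrix}$, its $3$-eigenvector $(1,1)$ and the helper names `mat_vec` and `is_eigenvector` are illustrative choices, not taken from the examples in these notes; Python's built-in complex numbers stand in for $\mathbb{C}$.

```python
# Hypothetical example: A = [[2, 1], [1, 2]] has (1, 1) as a 3-eigenvector.
def mat_vec(A, v):
    """Multiply a square matrix (given as a list of rows) by a vector (a list)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def is_eigenvector(A, v, lam, tol=1e-12):
    """True if v is nonzero and Av = lam * v, up to a small tolerance."""
    if all(abs(x) <= tol for x in v):
        return False  # eigenvectors are nonzero by definition
    Av = mat_vec(A, v)
    return all(abs(y - lam * x) <= tol for x, y in zip(v, Av))

A = [[2, 1], [1, 2]]
v = [1, 1]
lam = 3

# Every nonzero complex rescaling c*v is again a 3-eigenvector.
for c in [2, -0.5, 1j, 3 - 4j]:
    cv = [c * x for x in v]
    print(c, is_eigenvector(A, cv, lam))  # each line ends with True

print(is_eigenvector(A, [0, 0], lam))  # False: c = 0 gives the zero vector
```

The tolerance is only there because the rescaling factors may be floats; with integer or Gaussian-integer entries, as here, the comparison is exact.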

So for example, the vectors are all just rescalings of . Indeed, people often say things like "the eigenvector is ", when they mean "the eigenvectors are all the rescalings of ". If you write this kind of thing in your answers, that's fine.
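Saying "the eigenvector is $w$" is shorthand for "the eigenvectors are the nonzero multiples of $w$". For vectors in $\mathbb{C}^2$ there is a simple test for this: nonzero vectors $u$ and $w$ are multiples of each other exactly when $u_1 w_2 - u_2 w_1 = 0$. The sketch below implements that test; the function name `same_eigenline` and the sample vectors are hypothetical.

```python
def same_eigenline(u, w):
    """For nonzero u, w in C^2: True exactly when u = c*w for some nonzero c."""
    if u == [0, 0] or w == [0, 0]:
        raise ValueError("eigenvectors must be nonzero")
    # u and w are parallel iff the 2x2 determinant with columns u, w vanishes
    return u[0] * w[1] - u[1] * w[0] == 0

print(same_eigenline([1, 1], [5, 5]))     # True: (5, 5) = 5 * (1, 1)
print(same_eigenline([1, 1j], [2j, -2]))  # True: (2i, -2) = 2i * (1, i)
print(same_eigenline([1, 1], [1, -1]))    # False
```

Exact comparison with `== 0` is fine for the integer and Gaussian-integer entries used here; with floating-point data you would compare the determinant against a small tolerance instead.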

Suppose we have the identity matrix $I = \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix}$. The characteristic polynomial is $\det(I - \lambda I) = (1 - \lambda)^2$, so $\lambda = 1$ is the only eigenvalue. Any vector $v$ satisfies $Iv = v$, so any nonzero vector is a $1$-eigenvector. This has two free parameters, so it is an eigenplane, not just an eigenline: there is a whole plane of eigenvectors for the same eigenvalue.
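The same computation goes through for any scalar multiple of the identity. As a short derivation (the symbol $\mu$ for the scalar is introduced here for illustration):

$$
A = \mu I = \begin{pmatrix} \mu & 0 \\ 0 & \mu \end{pmatrix}, \qquad
\det(A - \lambda I) = \det\begin{pmatrix} \mu - \lambda & 0 \\ 0 & \mu - \lambda \end{pmatrix} = (\mu - \lambda)^2,
$$

$$
A\begin{pmatrix} x \\ y \end{pmatrix} = \begin{pmatrix} \mu x \\ \mu y \end{pmatrix} = \mu \begin{pmatrix} x \\ y \end{pmatrix} \quad \text{for all } x, y \in \mathbb{C}.
$$

So $\mu$ is the only eigenvalue and every nonzero vector is a $\mu$-eigenvector, with the two free parameters $x, y$. Taking $\mu = 1$ gives the identity matrix.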

The set of eigenvectors of an $n \times n$ matrix $A$ with eigenvalue $\lambda$, together with the zero vector, forms a (complex) subspace of $\mathbb{C}^n$ (i.e. it is closed under complex rescalings and under addition).

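Let $V = \{v \in \mathbb{C}^n : Av = \lambda v\}$ be the set of $\lambda$-eigenvectors of $A$, together with $0$. If $v \in V$ and $c \in \mathbb{C}$ then $cv \in V$ (as we saw above). If $v, w \in V$ then $A(v + w) = Av + Aw = \lambda v + \lambda w = \lambda(v + w)$, so $v + w \in V$: it is also a $\lambda$-eigenvector (unless it is $0$).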
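To see the closure property, and its limits, concretely, here is a small Python sketch. The diagonal matrix, the vectors and the helper `eigenvalue_of` are hypothetical illustrations, not examples from these notes. The sum of two $2$-eigenvectors is again a $2$-eigenvector, but the sum of eigenvectors with *different* eigenvalues need not be an eigenvector at all, which is why each eigenvalue gets its own subspace.

```python
# Hypothetical 3x3 example with a repeated eigenvalue: the 2-eigenspace
# of A is the plane {(x, y, 0)}, and (0, 0, 1) is a 5-eigenvector.
A = [[2, 0, 0],
     [0, 2, 0],
     [0, 0, 5]]

def mat_vec(A, v):
    """Multiply a square matrix (list of rows) by a vector (list)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def eigenvalue_of(A, v, tol=1e-12):
    """Return lam if v is nonzero and Av = lam * v, otherwise None."""
    Av = mat_vec(A, v)
    # Use the first (numerically) nonzero coordinate of v to read off lam.
    k = next((i for i, x in enumerate(v) if abs(x) > tol), None)
    if k is None:
        return None  # the zero vector is not an eigenvector
    lam = Av[k] / v[k]
    if all(abs(y - lam * x) <= tol for x, y in zip(v, Av)):
        return lam
    return None

def add(p, q):
    """Coordinatewise sum of two vectors."""
    return [a + b for a, b in zip(p, q)]

v = [1, 0, 0]   # 2-eigenvector
w = [0, 3j, 0]  # 2-eigenvector
u = [0, 0, 1]   # 5-eigenvector

print(eigenvalue_of(A, add(v, w)) == 2)  # True: the sum stays in the 2-eigenspace
print(eigenvalue_of(A, add(v, u)))       # None: not an eigenvector
```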

We call this subspace the $\lambda$-eigenspace. In all the examples we saw earlier (except the matrix just discussed, where every vector is an eigenvector), the eigenspaces were 1-dimensional eigenlines (one free variable). So a matrix gives you a collection of preferred directions or subspaces (its eigenspaces), which tell you something about the matrix (e.g. if it is a rotation matrix, its axis will be one of these subspaces, since nonzero vectors along the axis are fixed and are therefore $1$-eigenvectors).
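The number of free variables is the dimension of the eigenspace, which by rank–nullity equals $n - \operatorname{rank}(A - \lambda I)$. The sketch below computes this exactly with Python's `fractions.Fraction`; it assumes the matrix and $\lambda$ have rational entries (so it does not cover complex eigenvalues such as those of a rotation), and the helper names `rank` and `eigenspace_dim` are hypothetical.

```python
from fractions import Fraction

def rank(M):
    """Rank of a matrix with rational entries, by exact Gaussian elimination."""
    M = [[Fraction(x) for x in row] for row in M]
    rows, cols = len(M), len(M[0])
    r = 0  # number of pivots found so far
    for c in range(cols):
        # find a row at or below r with a nonzero entry in column c
        pivot = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        # clear column c in every row below the pivot row
        for i in range(r + 1, rows):
            factor = M[i][c] / M[r][c]
            M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
        if r == rows:
            break
    return r

def eigenspace_dim(A, lam):
    """Dimension of {v : Av = lam v}, i.e. n - rank(A - lam I)."""
    n = len(A)
    shifted = [[A[i][j] - (lam if i == j else 0) for j in range(n)] for i in range(n)]
    return n - rank(shifted)

print(eigenspace_dim([[1, 0], [0, 1]], 1))  # 2: the identity has an eigenplane
print(eigenspace_dim([[2, 1], [1, 2]], 3))  # 1: an eigenline
print(eigenspace_dim([[2, 1], [1, 2]], 5))  # 0: 5 is not an eigenvalue
```

Exact rational arithmetic is used so that a zero pivot really is zero; with floats you would need a tolerance, and deciding the rank numerically is a more delicate problem.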

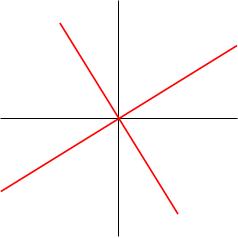

For the example we found the eigenvalues and eigenvectors . We now draw these two eigenlines (in red).

Note that these eigenlines look orthogonal; indeed, you can check that they are, by taking the dot product of the eigenvectors (it is zero). This is true more generally for real symmetric matrices (i.e. matrices $A$ such that $A^T = A$): eigenvectors with different eigenvalues are orthogonal.
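A small check of the orthogonality claim, as a sketch with illustrative matrices that are not taken from the text. For the symmetric matrix $\begin{pmatrix}2&1\\1&2\end{pmatrix}$ the eigenvectors $(1,1)$ (eigenvalue $3$) and $(1,-1)$ (eigenvalue $1$) have dot product $0$, while for the non-symmetric matrix $\begin{pmatrix}2&1\\0&1\end{pmatrix}$ the eigenvectors $(1,0)$ (eigenvalue $2$) and $(1,-1)$ (eigenvalue $1$) do not. The vectors here are real; for complex vectors the relevant inner product would conjugate one factor.

```python
def mat_vec(A, v):
    """Multiply a square matrix (list of rows) by a vector (list)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def dot(u, w):
    """Real dot product u . w."""
    return sum(a * b for a, b in zip(u, w))

def is_symmetric(A):
    """True if A equals its transpose."""
    n = len(A)
    return all(A[i][j] == A[j][i] for i in range(n) for j in range(n))

# Symmetric example: eigenvalues 3 and 1.
S = [[2, 1], [1, 2]]
s3, s1 = [1, 1], [1, -1]
assert mat_vec(S, s3) == [3 * x for x in s3]
assert mat_vec(S, s1) == [1 * x for x in s1]
print(is_symmetric(S), dot(s3, s1))  # True 0

# Non-symmetric example: eigenvalues 2 and 1.
N = [[2, 1], [0, 1]]
n2, n1 = [1, 0], [1, -1]
assert mat_vec(N, n2) == [2 * x for x in n2]
assert mat_vec(N, n1) == [1 * x for x in n1]
print(is_symmetric(N), dot(n2, n1))  # False 1
```

The assertions confirm that each vector really is an eigenvector before its dot product is inspected, so the contrast between the two cases comes from symmetry and not from a wrong eigenvector.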