40. Images

The image of a linear map $A\colon \mathbb{R}^n \to \mathbb{R}^m$ is the set of vectors $b \in \mathbb{R}^m$ such that $b = Av$ for some $v \in \mathbb{R}^n$. It is written as $\mathrm{im}(A)$.

If you think of applying a map as "following light rays" (like in some earlier examples), you can think of the image as the shadow your map casts.

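If the map is the vertical projection $A = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 0 \end{pmatrix}$, then the image of $A$ is the $xy$-plane. That is, $\mathrm{im}(A) = \{(x, y, 0) : x, y \in \mathbb{R}\}$.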

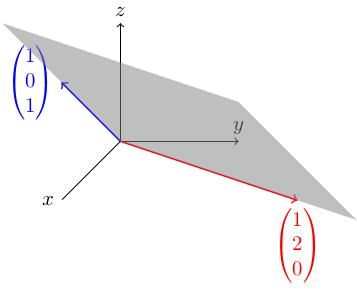

Consider the 3-by-2 matrix $A$. The image of the corresponding linear map is the set of all vectors of the form $A\begin{pmatrix} x \\ y \end{pmatrix}$ with $x, y \in \mathbb{R}$. We studied this example earlier and even drew a picture of its image: it is the grey plane in the figure below. (There is a slight "videographic typo" (i.e. a mistake) in the video; see if you can spot it.)

Remarks

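- $0 \in \mathrm{im}(A)$ because $A0 = 0$.
- If $A$ is invertible then $\mathrm{im}(A) = \mathbb{R}^n$. This is because if $b \in \mathbb{R}^n$ then $A(A^{-1}b) = b$, so $b \in \mathrm{im}(A)$.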

Image is a subspace

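The image of $A$ is a subspace.

If $b_1, b_2 \in \mathrm{im}(A)$ then so is $b_1 + b_2$. To see this, observe that if $b_1, b_2 \in \mathrm{im}(A)$ then $b_1 = Av_1$ and $b_2 = Av_2$ for some $v_1, v_2$. This means that $b_1 + b_2 = Av_1 + Av_2 = A(v_1 + v_2)$ (since $A$ is linear), so $b_1 + b_2 \in \mathrm{im}(A)$.

Similarly, for any scalar $\lambda$, $\lambda b_1 = \lambda A v_1 = A(\lambda v_1)$ (since $A$ is linear), so $\lambda b_1 \in \mathrm{im}(A)$.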

Relation with simultaneous equations

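The equation $Av = b$ has a solution $v$ if and only if $b \in \mathrm{im}(A)$.

This is a tautology from the definition of image! $Av = b$ has a solution if and only if there is a $v$ such that $Av = b$, which is exactly the statement $b \in \mathrm{im}(A)$.

So, putting this together with the last lecture, we see that $Av = b$ has a solution if and only if $b \in \mathrm{im}(A)$ and, if it has a solution $v_0$, then the space of solutions is the translate $v_0 + \ker(A)$ of the kernel.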
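To make the criterion concrete, here is a minimal sketch in Python (standard library only) that decides whether $Av = b$ is solvable by row-reducing the augmented matrix $[A \mid b]$. The function names `rref` and `is_solvable` are hypothetical, chosen for illustration, and exact `Fraction` arithmetic is used to avoid floating-point round-off. The system is inconsistent exactly when a leading entry lands in the last (augmented) column, i.e. some row reads $0 = $ (nonzero), which is the same as $b \notin \mathrm{im}(A)$.

```python
from fractions import Fraction


def rref(rows):
    """Return (R, pivots): the reduced echelon form of `rows` and the
    indices of the columns that contain leading entries."""
    R = [[Fraction(x) for x in row] for row in rows]
    m = len(R)
    n = len(R[0]) if m else 0
    pivots = []
    r = 0  # row where the next leading entry will be placed
    for c in range(n):
        # Look for a nonzero entry in column c at or below row r.
        p = next((i for i in range(r, m) if R[i][c] != 0), None)
        if p is None:
            continue  # no leading entry in this column
        R[r], R[p] = R[p], R[r]              # swap rows
        lead = R[r][c]
        R[r] = [x / lead for x in R[r]]      # scale so the leading entry is 1
        for i in range(m):                   # clear the rest of column c
            if i != r and R[i][c] != 0:
                f = R[i][c]
                R[i] = [a - f * b for a, b in zip(R[i], R[r])]
        pivots.append(c)
        r += 1
        if r == m:
            break
    return R, pivots


def is_solvable(A, b):
    """True iff Av = b has a solution, i.e. iff b lies in im(A)."""
    augmented = [list(row) + [bi] for row, bi in zip(A, b)]
    _, pivots = rref(augmented)
    n = len(A[0])  # number of unknowns (columns of A)
    # A leading entry in the b column means some row reads 0 = (nonzero).
    return n not in pivots


# The vertical projection to the xy-plane.
P = [[1, 0, 0],
     [0, 1, 0],
     [0, 0, 0]]
print(is_solvable(P, [1, 2, 0]))  # True: (1, 2, 0) lies in the xy-plane
print(is_solvable(P, [1, 2, 3]))  # False: nonzero z-coordinate
```

Checking the last column for a leading entry mirrors the hand procedure: it is the row-reduction form of asking whether $b$ is in the image.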

Rank

The rank of a linear map/matrix is the dimension of its image.

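(Rank-nullity theorem) If $A$ is an $m$-by-$n$ matrix (or $A\colon \mathbb{R}^n \to \mathbb{R}^m$ is a linear map) then $$\mathrm{rank}(A) + \mathrm{nullity}(A) = n.$$ Here $n$ is the number of columns of $A$ (or the dimension of the domain of $A$).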

The 3-by-3 zero matrix $A = 0$ sends everything to zero, so its image is a single point, which has dimension zero, so $\mathrm{rank}(A) = 0$. The kernel is the set of vectors which map to zero, and since everything maps to zero the kernel is $\mathbb{R}^3$. Therefore the nullity (dimension of the kernel) is three. Note that $\mathrm{rank}(A) + \mathrm{nullity}(A) = 0 + 3 = 3$ and $n = 3$ ($A$ is a 3-by-3 matrix), so the rank-nullity theorem holds.

The 3-by-3 matrix $A = \begin{pmatrix} 1 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{pmatrix}$ sends $(x, y, z)$ to $(x, 0, 0)$, so its image is the $x$-axis. Therefore the rank (dimension of the image) is 1. The nullity is the number of free variables ($A$ is already in reduced echelon form), which is 2 (there is one leading entry). Again, $1 + 2 = 3$, as expected. We can see that as the rank increases, the nullity goes down (as required by the rank-nullity theorem).

The matrix $\begin{pmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 0 \end{pmatrix}$ is the vertical projection to the $xy$-plane, so its rank is 2 (its image is the $xy$-plane). Its nullity is 1 (one free variable). Again, $2 + 1 = 3$.

The 3-by-3 identity matrix $I$ has rank 3 (for any $v \in \mathbb{R}^3$ we have $Iv = v$, so every vector is in the image) and nullity 0 (only the origin maps to the origin). Again, $3 + 0 = 3$.
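The four examples can be checked mechanically. The sketch below (Python, standard library only; `rref`, `transpose`, `rank` and `nullity` are hypothetical helper names) computes the rank directly as the dimension of the image, using the fact that $\mathrm{im}(A)$ is spanned by the columns $c_1, \ldots, c_n$ of $A$ (since $Av = v_1 c_1 + \cdots + v_n c_n$). It does this by row-reducing $A^T$, whose rows are the columns of $A$: row operations do not change the span of the rows, and the nonzero rows of a reduced echelon form are linearly independent. The nullity is computed separately as the number of free variables of $A$. For the second example we assume the matrix sending $(x, y, z)$ to $(x, 0, 0)$.

```python
from fractions import Fraction


def rref(rows):
    """Return (R, pivots): reduced echelon form and leading-entry columns."""
    R = [[Fraction(x) for x in row] for row in rows]
    m = len(R)
    n = len(R[0]) if m else 0
    pivots, r = [], 0
    for c in range(n):
        p = next((i for i in range(r, m) if R[i][c] != 0), None)
        if p is None:
            continue  # no leading entry in column c
        R[r], R[p] = R[p], R[r]
        lead = R[r][c]
        R[r] = [x / lead for x in R[r]]
        for i in range(m):
            if i != r and R[i][c] != 0:
                f = R[i][c]
                R[i] = [a - f * b for a, b in zip(R[i], R[r])]
        pivots.append(c)
        r += 1
        if r == m:
            break
    return R, pivots


def transpose(A):
    return [list(col) for col in zip(*A)]


def rank(A):
    """dim im(A), computed as the dimension of the span of the columns of A."""
    # Rows of A^T are the columns of A; the number of leading entries in
    # its reduced echelon form is the dimension of their span.
    return len(rref(transpose(A))[1])


def nullity(A):
    """dim ker(A): columns of rref(A) with no leading entry (free variables)."""
    return len(A[0]) - len(rref(A)[1])


examples = {
    "zero": [[0, 0, 0], [0, 0, 0], [0, 0, 0]],
    "x-axis": [[1, 0, 0], [0, 0, 0], [0, 0, 0]],
    "projection": [[1, 0, 0], [0, 1, 0], [0, 0, 0]],
    "identity": [[1, 0, 0], [0, 1, 0], [0, 0, 1]],
}
for name, A in examples.items():
    print(f"{name}: rank {rank(A)}, nullity {nullity(A)}")
# zero: rank 0, nullity 3
# x-axis: rank 1, nullity 2
# projection: rank 2, nullity 1
# identity: rank 3, nullity 0
```

Because the rank is computed from the columns and the nullity from the free variables, the fact that they always add up to the number of columns is a genuine check of the theorem rather than a restatement of it.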

The rank-nullity theorem is basically saying that the map takes $\mathbb{R}^n$, crushes down some of the dimensions (those in the kernel), and maps the rest faithfully onto the image, so each of the $n$ dimensions of $\mathbb{R}^n$ contributes either to the kernel or to the image.

Proof of rank-nullity theorem

The nullity of $A$ is the number of free variables of $A$ when you put it into reduced echelon form. If we can show that the rank is the number of dependent variables then we're done: there are $n$ variables, each of which is either free (contributing to the kernel) or dependent (contributing to the rank). Recall that the dependent variables correspond to the columns with leading entries in reduced echelon form.

So we need to show that the rank is the number of leading entries of $A$ in reduced echelon form.

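First step: we prove that the rank doesn't change when we do a row operation. Suppose we start with a matrix $A$ and do a row operation to get a matrix $A'$. We know there is an elementary matrix $E$ such that $A' = EA$. This tells us immediately that $\mathrm{im}(A)$ and $\mathrm{im}(A')$ have the same dimension: since $A'v = E(Av)$, the matrix $E$ sends $\mathrm{im}(A)$ onto $\mathrm{im}(A')$, and because $E$ is invertible this gives an "isomorphism" (invertible linear map) from the image of $A$ to the image of $A'$.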
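As a small numerical illustration of the first step, the sketch below (Python, standard library only; the helpers `rref`, `transpose`, `rank` and `matmul` and the example matrix are hypothetical, chosen for illustration) applies the row operation "add $-2$ times row 1 to row 2" as the elementary matrix $E$, checks that $\mathrm{rank}(A) = \mathrm{rank}(EA)$, and checks that $E^{-1}$ undoes the operation. As before, the rank is computed as the dimension of the span of the columns.

```python
from fractions import Fraction


def rref(rows):
    """Return (R, pivots): reduced echelon form and leading-entry columns."""
    R = [[Fraction(x) for x in row] for row in rows]
    m = len(R)
    n = len(R[0]) if m else 0
    pivots, r = [], 0
    for c in range(n):
        p = next((i for i in range(r, m) if R[i][c] != 0), None)
        if p is None:
            continue
        R[r], R[p] = R[p], R[r]
        lead = R[r][c]
        R[r] = [x / lead for x in R[r]]
        for i in range(m):
            if i != r and R[i][c] != 0:
                f = R[i][c]
                R[i] = [a - f * b for a, b in zip(R[i], R[r])]
        pivots.append(c)
        r += 1
        if r == m:
            break
    return R, pivots


def transpose(A):
    return [list(col) for col in zip(*A)]


def rank(A):
    """dim im(A): dimension of the span of the columns of A (rows of A^T)."""
    return len(rref(transpose(A))[1])


def matmul(X, Y):
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]


A = [[1, 2, 3],
     [2, 4, 6],
     [1, 0, 1]]
# Elementary matrix for "add -2 times row 1 to row 2".
E = [[1, 0, 0],
     [-2, 1, 0],
     [0, 0, 1]]
# Its inverse undoes the operation: "add 2 times row 1 to row 2".
E_inv = [[1, 0, 0],
         [2, 1, 0],
         [0, 0, 1]]

A_prime = matmul(E, A)
print(A_prime)                      # [[1, 2, 3], [0, 0, 0], [1, 0, 1]]
print(rank(A), rank(A_prime))       # 2 2
print(matmul(E_inv, A_prime) == A)  # True
```

One example proves nothing on its own, of course; the point is only to see $A' = EA$ and the invertibility of $E$ at work on actual numbers.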

As the rank doesn't change under row operations, we may assume without loss of generality that $A$ is in reduced echelon form.

Second step: if $A$ is in reduced echelon form then it has $k$ nonzero rows (for some $k$) followed by $m - k$ zero rows. Now:

- The number $k$ is the number of leading entries (because each nonzero row has a leading entry and each zero row doesn't).
- Recall that $Av = b$ has a solution if and only if $b_{k+1} = \cdots = b_m = 0$: these are the necessary and sufficient conditions for solving the simultaneous equations. If $A$ has a zero row then $b$ has to have a zero in that row, and if all these $b_i$ with $i > k$ are zero then the other rows of $A$ just give us equations which determine the dependent variables.

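Since the image of $A$ is the set of $b$ for which $Av = b$ has a solution, this means that $\mathrm{im}(A)$ is the set of $b$ for which $b_{k+1} = \cdots = b_m = 0$, i.e. those of the form $(b_1, \ldots, b_k, 0, \ldots, 0)$. This is a $k$-dimensional space (spanned by the first $k$ standard basis vectors), so we see that the rank equals $k$, the number of leading entries.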
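The second step can also be seen constructively. In reduced echelon form, the column containing the leading entry of row $i$ is the $i$-th standard basis vector $e_i$, so if $b_{k+1} = \cdots = b_m = 0$ we can set that dependent variable to $b_i$ and every free variable to $0$, giving $Av = b_1 e_1 + \cdots + b_k e_k = b$. The Python sketch below (hypothetical helper names `matvec`, `pivot_columns`, `preimage`; it assumes its input is already in reduced echelon form with leading entries equal to 1, and uses 0-based column indices) does exactly this.

```python
def matvec(A, v):
    """Matrix-vector product Av."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def pivot_columns(R):
    """Column index of the leading entry in each nonzero row of R."""
    cols = []
    for row in R:
        for j, x in enumerate(row):
            if x != 0:
                cols.append(j)
                break
    return cols


def preimage(R, b):
    """Assuming R is in reduced echelon form, return some v with Rv = b,
    or None when b is not in im(R)."""
    pivots = pivot_columns(R)
    k = len(pivots)  # nonzero rows = leading entries
    if any(bi != 0 for bi in b[k:]):
        return None  # a zero row of R cannot produce a nonzero b_i
    v = [0] * len(R[0])  # free variables set to 0
    for i, p in enumerate(pivots):
        v[p] = b[i]  # column p is the i-th standard basis vector
    return v


R = [[1, 2, 0, 3],
     [0, 0, 1, 4],
     [0, 0, 0, 0]]  # k = 2 leading entries, in columns 0 and 2
v = preimage(R, [5, 7, 0])
print(v, matvec(R, v))         # [5, 0, 7, 0] [5, 7, 0]
print(preimage(R, [5, 7, 1]))  # None
```

Every $b$ with zeros in the last $m - k$ entries is reached, and nothing else is, which is the $k$-dimensional image described above.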

This completes the proof of the rank-nullity theorem.