Recall the following definitions:

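A map $f\colon\mathbf{R}^n\to\mathbf{R}^m$ is a linear map if

- $f(v+w)=f(v)+f(w)$ for all $v,w\in\mathbf{R}^n$,
- $f(\lambda v)=\lambda f(v)$ for all $\lambda\in\mathbf{R}$ and all $v\in\mathbf{R}^n$.

A subset $V\subset\mathbf{R}^n$ is a linear subspace if

- $v,w\in V$ implies $v+w\in V$,
- $v\in V$ implies $\lambda v\in V$ for all $\lambda\in\mathbf{R}$.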

These two definitions are very similar. We will exploit this in the next two videos: given a linear map $f$, we will associate to it two subspaces, $\ker(f)$ (the kernel of $f$) and $\operatorname{im}(f)$ (the image of $f$).

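The kernel of $f\colon\mathbf{R}^n\to\mathbf{R}^m$ is the set of $v\in\mathbf{R}^n$ such that $f(v)=0$. (If $f(v)=Av$ for a square matrix $A$, then this is just the 0-eigenspace of $A$.)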

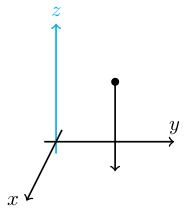

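Let $f\colon\mathbf{R}^3\to\mathbf{R}^3$ be the map $f(v)=Av$ for $A=\begin{pmatrix}1&0&0\\0&1&0\\0&0&0\end{pmatrix}$. Note that $f\begin{pmatrix}x\\y\\z\end{pmatrix}=\begin{pmatrix}x\\y\\0\end{pmatrix}$. This is the vertical projection to the $xy$-plane.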

The kernel of $f$ is the $z$-axis (blue in the figure; these are the points which project vertically down to the origin). That is, $\ker(f)=\left\{\begin{pmatrix}0\\0\\z\end{pmatrix} : z\in\mathbf{R}\right\}$.
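As a quick sanity check of this example, here is a minimal Python sketch that applies the vertical projection to a few vectors and tests whether they are sent to zero. It assumes the projection is represented by the 3-by-3 matrix with diagonal entries 1, 1, 0; the helper names `mat_vec` and `in_kernel` are illustrative and not part of the lecture.

```python
# Vertical projection to the xy-plane, written as a 3-by-3 matrix
# (assumed representation of the example above).
A = [
    [1, 0, 0],
    [0, 1, 0],
    [0, 0, 0],
]

def mat_vec(matrix, v):
    """Return the matrix-vector product as a list."""
    return [sum(a * x for a, x in zip(row, v)) for row in matrix]

def in_kernel(matrix, v):
    """True if the matrix sends v to the zero vector."""
    return all(entry == 0 for entry in mat_vec(matrix, v))

print(mat_vec(A, [2, 3, 5]))    # [2, 3, 0]
print(in_kernel(A, [0, 0, 7]))  # True: a point on the z-axis
print(in_kernel(A, [1, 0, 7]))  # False: not on the z-axis
```

Only vectors whose first two coordinates vanish pass the test, which matches the description of the kernel as the vertical axis.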

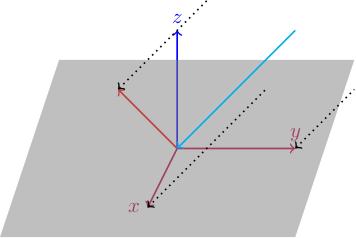

Recall the example from Video 3 of a map going from $\mathbf{R}^3$ to $\mathbf{R}^2$. This projects vectors into the plane; if we think of $\mathbf{R}^2$ as the $xy$-plane then we can visualise this map as the projection of vectors along a fixed direction until they live in the $xy$-plane.

We described this as projecting light rays in that direction. In this case, the kernel of the map is precisely the light ray which hits the origin, which is the line through the origin in the projection direction (light blue in the picture).

The kernel is a subspace.

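Given $v,w\in\ker(f)$, we need to show that $v+w\in\ker(f)$. Since $v,w\in\ker(f)$, we know that $f(v)=f(w)=0$. Therefore (since $f$ is linear) $f(v+w)=f(v)+f(w)=0+0=0$, so $v+w\in\ker(f)$. Similarly, $f(\lambda v)=\lambda f(v)=\lambda\cdot 0=0$, so $\lambda v\in\ker(f)$ for all $\lambda\in\mathbf{R}$.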

$0\in\ker(f)$ for any linear map $f$ because $f(0)=0$.

If $f$ is invertible then $\ker(f)=\{0\}$: if $f(v)=0$ then $v=f^{-1}(0)=0$.

The "kernel" in a nut is the little bit in the middle that's left when you strip away the husk. If $f(v)=Av$ then we can think of $\ker(f)$ as the space of solutions to the simultaneous equations $Av=0$, which is the intersection of the hyperplanes $A_{11}v_1+\cdots+A_{1n}v_n=0,\ \ldots,\ A_{m1}v_1+\cdots+A_{mn}v_n=0$. In other words, it's the little bit left over when you've intersected all these hyperplanes.

Consider the simultaneous equations $Av=b$ ($A$ is an $m$-by-$n$ matrix and $b\in\mathbf{R}^m$). Let $f(v)=Av$. The space of solutions to $Av=b$, if nonempty, is an affine translate of $\ker(f)$.

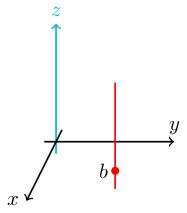

If $A=\begin{pmatrix}1&0&0\\0&1&0\\0&0&0\end{pmatrix}$ (so $f$ is vertical projection to the $xy$-plane) then $Av=b$ has a solution only if $b$ is in the $xy$-plane, and in that case it has a whole vertical line of solutions sitting above $b$.

This vertical line of solutions is parallel to the kernel of $f$ (the $z$-axis), i.e. it is a translate of the kernel.
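The translate picture can be checked by hand on a small case. The sketch below is illustrative only: it assumes the vertical projection matrix with diagonal entries 1, 1, 0, picks a hypothetical right-hand side `b` in the horizontal plane, and confirms that adding kernel vectors to one particular solution `v0` gives further solutions.

```python
# Assumed setup: vertical projection matrix and a right-hand side b
# lying in the xy-plane (third coordinate zero).
A = [
    [1, 0, 0],
    [0, 1, 0],
    [0, 0, 0],
]
b = [2, 3, 0]

def mat_vec(matrix, v):
    """Return the matrix-vector product as a list."""
    return [sum(a * x for a, x in zip(row, v)) for row in matrix]

v0 = [2, 3, 0]                                    # one particular solution
kernel_samples = [[0, 0, t] for t in (-1, 0, 4)]  # points on the z-axis

# Translate each kernel vector by v0.
solutions = [[p + k for p, k in zip(v0, u)] for u in kernel_samples]
for v in solutions:
    print(v, mat_vec(A, v) == b)  # each line ends with True

# The difference of two solutions lies in the kernel.
diff = [x - y for x, y in zip(solutions[0], solutions[2])]
print(mat_vec(A, diff))  # [0, 0, 0]
```

If `b` had a nonzero third coordinate there would be no solution at all, since the third coordinate of the product is always zero for this matrix.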

We saw this lemma earlier in a different guise in Video 19. Namely, we saw that if $v_0$ is a solution to $Av=b$ then the set of all solutions is the affine subspace $v_0+U$ where $U$ is the space of solutions to $Av=0$. In other words, $U=\ker(f)$.

In particular, we see that if $Av=b$ has a solution then it has a $k$-dimensional space of solutions, where $k$ is the dimension of $\ker(f)$.

Remember that the space of solutions has dimension equal to the number of free variables when we put $A$ into reduced echelon form. For example, $\begin{pmatrix}1&0&0\\0&1&0\\0&0&0\end{pmatrix}$ is in reduced echelon form with two leading entries and one free variable, which is why we get 1-dimensional solution spaces.

The nullity of $f$ (or of $A$) is the dimension of $\ker(f)$ (i.e. the number of free variables of $A$ when put into reduced echelon form).
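To make the "count the free variables" recipe concrete, here is a minimal sketch that row-reduces a matrix with exact rational arithmetic and counts the columns without a leading entry. The function names `rref` and `nullity` are illustrative choices, and this is one straightforward way to do the reduction rather than a prescribed method.

```python
from fractions import Fraction

def rref(matrix):
    """Return (reduced echelon form, list of pivot column indices)."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n_cols = len(rows[0]) if rows else 0
    pivot_cols = []
    r = 0  # index of the row where the next leading entry will go
    for c in range(n_cols):
        # Look for a row at or below r with a nonzero entry in column c.
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no leading entry here: column c is a free variable
        rows[r], rows[pivot] = rows[pivot], rows[r]
        lead = rows[r][c]
        rows[r] = [x / lead for x in rows[r]]  # scale leading entry to 1
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        pivot_cols.append(c)
        r += 1
        if r == len(rows):
            break  # every row has a leading entry; remaining columns are free
    return rows, pivot_cols

def nullity(matrix):
    """Number of free variables = number of columns minus number of pivots."""
    _, pivots = rref(matrix)
    return len(matrix[0]) - len(pivots)

print(nullity([[1, 0, 0], [0, 1, 0], [0, 0, 0]]))  # 1
print(nullity([[1, 2], [2, 4]]))                   # 1
print(nullity([[1, 0], [0, 1]]))                   # 0
```

The first call is the vertical projection example, with two leading entries and one free variable; the last is an invertible matrix, whose kernel is just the zero vector.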

Our goal for the next video is to prove the rank-nullity theorem which gives us a nice formula relating the nullity to another important number called the rank.