Optional: Matrix norms

We defined $\exp(A) = \sum_{n=0}^{\infty} \frac{1}{n!} A^n$ and claimed that it converges. What does it mean for a sequence of matrices to converge?

A sequence of matrices $A_1, A_2, A_3, \ldots$ converges to a matrix $A$ if, for all $\epsilon > 0$, there exists an $N$ such that $\|A_k - A\| < \epsilon$ whenever $k \geq N$.

Here, $\|\cdot\|$ denotes a matrix norm, i.e. a way of measuring how "big" a matrix is.
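As an illustration of the definition, here is a minimal sketch that takes the sequence to be the partial sums $S_k = \sum_{n=0}^{k} A^n/n!$ of the exponential series for the diagonal matrix $A = \mathrm{diag}(1, 2)$, whose limit is $\mathrm{diag}(e, e^2)$, and uses the sum of the absolute values of the entries as the matrix norm. Given $\epsilon$, it searches for the first $k$ with $\|S_k - \exp(A)\| < \epsilon$. The helper names (`exp_partial_sums`, `first_index_within`, and so on) are hypothetical. In this example every entry of $S_k$ increases towards its limit, so the first such $k$ also works as $N$ for all later $k$; for a general sequence you would need to check the whole tail.

```python
import math

def l1_norm(A):
    """Sum of the absolute values of the entries of A (a list of rows)."""
    return sum(abs(x) for row in A for x in row)

def mat_sub(A, B):
    return [[a - b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def mat_mul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def exp_partial_sums(A, count):
    """Yield S_0, ..., S_{count-1}, where S_k = sum_{n=0}^{k} A^n / n!."""
    size = len(A)
    term = [[1.0 if i == j else 0.0 for j in range(size)] for i in range(size)]  # A^0/0! = I
    total = [row[:] for row in term]
    for k in range(count):
        yield total
        # Next term: A^(k+1)/(k+1)! = (A^k/k!) * A / (k+1)
        term = [[x / (k + 1) for x in row] for row in mat_mul(term, A)]
        total = [[s + t for s, t in zip(rs, rt)] for rs, rt in zip(total, term)]

def first_index_within(A, limit, eps, max_terms=100):
    """Smallest k with ||S_k - limit|| < eps (sum-of-entries norm), or None."""
    for k, S in enumerate(exp_partial_sums(A, max_terms)):
        if l1_norm(mat_sub(S, limit)) < eps:
            return k
    return None

A = [[1.0, 0.0], [0.0, 2.0]]
limit = [[math.e, 0.0], [0.0, math.exp(2)]]  # exp of a diagonal matrix
N = first_index_within(A, limit, 1e-6)
print(N)
```

Shrinking `eps` pushes the returned index up, which is exactly the "for all $\epsilon$ there exists an $N$" behaviour the definition asks for.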

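A matrix norm is a function $\|\cdot\|$ on the space $\mathfrak{gl}(n, \mathbf{R})$ of $n \times n$ real matrices, taking values in $[0, \infty)$, such that:

- $\|A + B\| \leq \|A\| + \|B\|$ (triangle inequality) for all $A, B$;
- $\|\lambda A\| = |\lambda| \|A\|$ for all $\lambda \in \mathbf{R}$ and $A$;
- $\|A\| = 0$ if and only if $A = 0$.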

We will focus on two particular matrix norms.

L1 norm

The $L^1$-norm of $A$, $\|A\|_1$, is $\sum_{i,j} |A_{ij}|$. So a matrix is "big in the $L^1$ norm" if it has an entry with large absolute value.

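If $\|A\|_1 = 0$ then $|A_{ij}| = 0$ for all $i, j$ (a sum of non-negative numbers vanishes only if every term does), so $A = 0$. If we rescale $A$ by $\lambda$ then all entries are scaled by $\lambda$, so $\|\lambda A\|_1 = |\lambda| \|A\|_1$. The triangle inequality follows by applying the triangle inequality for absolute values to each matrix entry: $\|A + B\|_1 = \sum_{i,j} |A_{ij} + B_{ij}| \leq \sum_{i,j} \left(|A_{ij}| + |B_{ij}|\right) = \|A\|_1 + \|B\|_1$.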
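A minimal sketch, assuming matrices are stored as Python lists of rows: it computes $\|A\|_1$ and spot-checks the three norm axioms on randomly generated matrices. The function names and the random-testing approach are illustrative choices, not from the text, and a passing check is numerical evidence rather than a proof.

```python
import random

def l1_norm(A):
    """||A||_1 = sum over i, j of |A[i][j]|, with A given as a list of rows."""
    return sum(abs(x) for row in A for x in row)

def add(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def scale(lam, A):
    return [[lam * a for a in row] for row in A]

def check_norm_axioms(norm, n=3, trials=1000, seed=0, tol=1e-9):
    """Spot-check the three norm axioms on random n x n matrices.

    Passing is evidence, not a proof: only finitely many matrices are tested.
    """
    rng = random.Random(seed)

    def random_matrix():
        return [[rng.uniform(-5, 5) for _ in range(n)] for _ in range(n)]

    if norm([[0.0] * n for _ in range(n)]) != 0:
        return False  # the zero matrix must have norm 0
    for _ in range(trials):
        A, B, lam = random_matrix(), random_matrix(), rng.uniform(-5, 5)
        if norm(add(A, B)) > norm(A) + norm(B) + tol:
            return False  # triangle inequality fails
        if abs(norm(scale(lam, A)) - abs(lam) * norm(A)) > tol:
            return False  # homogeneity fails
        if norm(A) <= 0:
            return False  # a random A is almost surely nonzero, so needs positive norm
    return True

print(l1_norm([[1, -7], [3, 2]]))
print(check_norm_axioms(l1_norm))
```

The small tolerance `tol` absorbs floating-point rounding; the exact arguments in the text need no such allowance.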

Operator norm

The

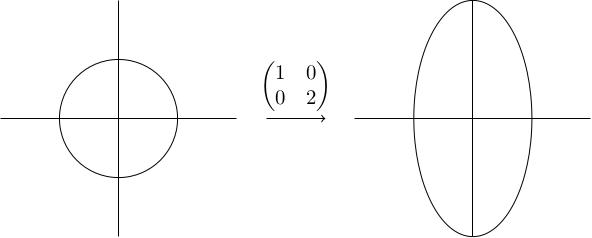

Another way to think of this is: you take the unit sphere in $\mathbf{R}^n$, you apply $A$ to obtain an ellipsoid, and you take the largest distance from the origin to a point on this ellipsoid.

Take $A = \begin{pmatrix} 1 & 0 \\ 0 & 2 \end{pmatrix}$. The $x$-axis is fixed and the $y$-axis is rescaled by a factor of 2. The unit circle therefore becomes an ellipse with horizontal semi-axis 1 and vertical semi-axis 2. The furthest points from the origin are the north and south poles $(0, \pm 2)$, at distance 2, so $\|A\|_{\mathrm{op}} = 2$.
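The "furthest point on the ellipse" picture can be checked numerically for $2 \times 2$ matrices. This minimal sketch (the function name `sampled_operator_norm_2x2` is hypothetical) samples unit vectors $(\cos t, \sin t)$ around the circle, applies $A$, and keeps the largest length. Sampling only gives a lower bound, which approaches $\|A\|_{\mathrm{op}}$ as the number of samples grows.

```python
import math

def sampled_operator_norm_2x2(A, samples=100_000):
    """Approximate max_{|v|=1} |Av| for a 2x2 matrix A by sampling the unit circle.

    Each v = (cos t, sin t) is a unit vector and Av lies on the image ellipse;
    we keep the largest distance of such a point from the origin.
    """
    (a, b), (c, d) = A
    best = 0.0
    for k in range(samples):
        t = 2 * math.pi * k / samples
        x, y = math.cos(t), math.sin(t)
        best = max(best, math.hypot(a * x + b * y, c * x + d * y))
    return best

print(sampled_operator_norm_2x2([[1, 0], [0, 2]]))  # the diagonal example above
print(sampled_operator_norm_2x2([[1, 1], [0, 1]]))  # a shear, for comparison
```

For the shear $\begin{pmatrix} 1 & 1 \\ 0 & 1 \end{pmatrix}$ the result should be close to the golden ratio $(1 + \sqrt{5})/2$, the square root of the largest eigenvalue of $A^T A$.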

To see that the operator norm is a norm, note that:

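- If $\|A\|_{\mathrm{op}} = 0$ then every point on the ellipsoid (the image of the unit sphere) is at distance 0 from the origin, so $A$ sends the whole unit sphere to the origin. Since every nonzero vector is a multiple of a unit vector, $Av = 0$ for all $v$. Therefore $A = 0$.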

- If you rescale $A$ by $\lambda$ then the lengths $|Av|$ over which we take the maximum are rescaled by $|\lambda|$, so $\|\lambda A\|_{\mathrm{op}} = |\lambda| \|A\|_{\mathrm{op}}$.

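- To prove the triangle inequality, note that for every unit vector $v$ we have $|(A + B)v| = |Av + Bv| \leq |Av| + |Bv| \leq \|A\|_{\mathrm{op}} + \|B\|_{\mathrm{op}}$. Since $\|A + B\|_{\mathrm{op}}$ is the maximum of $|(A + B)v|$ over unit vectors $v$, this shows $\|A + B\|_{\mathrm{op}} \leq \|A\|_{\mathrm{op}} + \|B\|_{\mathrm{op}}$.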

Lipschitz equivalence

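Any two matrix norms on $\mathfrak{gl}(n, \mathbf{R})$ are Lipschitz equivalent. For the two norms we've met so far, this means there exist constants $c_1, C_1, c_2, C_2 > 0$ (independent of $A$) such that: $$c_1 \|A\|_1 \leq \|A\|_{\mathrm{op}} \leq C_1 \|A\|_1 \quad \text{and} \quad c_2 \|A\|_{\mathrm{op}} \leq \|A\|_1 \leq C_2 \|A\|_{\mathrm{op}}.$$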

These inequalities will be useful in the proof of convergence: sometimes it's easier to bound one norm or the other, and this tells us that if you can bound one then you can bound the other. It also tells us that the notion of convergence we defined (where we had implicitly picked a matrix norm) doesn't depend on which norm we picked.

We won't prove the lemma, but for those who are interested, it's true more generally that any two norms on a finite-dimensional vector space are Lipschitz equivalent. This fails for infinite-dimensional vector spaces, but we're working with $\mathfrak{gl}(n, \mathbf{R})$, which is $n^2$-dimensional.
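For concreteness, one valid choice of constants (a standard fact, not stated in the text) is $\frac{1}{n^2}\|A\|_1 \leq \|A\|_{\mathrm{op}} \leq \|A\|_1$. The upper bound holds because, for a unit vector $v$, $|Av| \leq \sum_j |v_j| |Ae_j| \leq \sum_j |Ae_j| \leq \|A\|_1$. The lower bound holds because $|A_{ij}| = |e_i \cdot Ae_j| \leq \|A\|_{\mathrm{op}}$ and there are $n^2$ entries. The sketch below checks the ratio $\|A\|_{\mathrm{op}} / \|A\|_1$ on random $2 \times 2$ matrices. It assumes the standard formula $\|A\|_{\mathrm{op}} = \sqrt{\lambda_{\max}(A^T A)}$, which for $2 \times 2$ matrices has a closed form; the function names are hypothetical.

```python
import math
import random

def l1_norm(A):
    """||A||_1: sum of the absolute values of the entries of A."""
    return sum(abs(x) for row in A for x in row)

def operator_norm_2x2(A):
    """||A||_op for a 2x2 matrix A, as sqrt of the largest eigenvalue of A^T A."""
    (a, b), (c, d) = A
    # A^T A = [[p, q], [q, r]] is symmetric, so its eigenvalues have a closed form.
    p, q, r = a * a + c * c, a * b + c * d, b * b + d * d
    return math.sqrt((p + r) / 2 + math.sqrt(((p - r) / 2) ** 2 + q * q))

n = 2
rng = random.Random(1)
ratios = []
for _ in range(10_000):
    A = [[rng.uniform(-3, 3) for _ in range(n)] for _ in range(n)]
    ratios.append(operator_norm_2x2(A) / l1_norm(A))

# The bounds above predict 1/n^2 <= ratio <= 1 for every sample.
print(min(ratios), max(ratios))
```

The constants here are not sharp; the point is only that some constants independent of $A$ exist.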

Properties of the operator norm

We will now prove some useful properties of the operator norm. Since we're only focusing on this norm, we will drop the subscript and write $\|A\|$ for $\|A\|_{\mathrm{op}}$.

- For any vector $v$, $|Av| \leq \|A\| |v|$.
- $\|AB\| \leq \|A\| \|B\|$.
- $\|A^n\| \leq \|A\|^n$.

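- Write $v$ as $|v| u$ for some unit vector $u$ (if $v = 0$ both sides are zero). Then $|Av| = |v| |Au| \leq |v| \|A\|$, because $|Au| \leq \|A\|$ by definition of the operator norm.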

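- Let $v$ be a unit vector. We have $|ABv| \leq \|A\| |Bv| \leq \|A\| \|B\| |v| = \|A\| \|B\|$, using the first part of the lemma twice. This shows that the quantities we maximise over to get $\|AB\|$ are all at most $\|A\| \|B\|$, so $\|AB\| \leq \|A\| \|B\|$.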

- Lastly, $\|A^n\| \leq \|A\| \|A^{n-1}\| \leq \cdots \leq \|A\|^n$, using the previous part of the lemma inductively.
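As a numerical sanity check of all three parts, here is a minimal sketch on random $2 \times 2$ matrices. It again assumes the standard closed form $\|A\| = \sqrt{\lambda_{\max}(A^T A)}$ for $2 \times 2$ matrices (not derived in the text), and the helper names such as `check_lemma` are hypothetical. A small relative tolerance absorbs floating-point rounding; the check is evidence, not a substitute for the proof above.

```python
import math
import random

def operator_norm_2x2(A):
    """||A|| for a 2x2 matrix A: sqrt of the largest eigenvalue of A^T A."""
    (a, b), (c, d) = A
    p, q, r = a * a + c * c, a * b + c * d, b * b + d * d
    return math.sqrt((p + r) / 2 + math.sqrt(((p - r) / 2) ** 2 + q * q))

def mat_mul(A, B):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*B)] for row in A]

def mat_vec(A, v):
    return [sum(x * y for x, y in zip(row, v)) for row in A]

def mat_pow(A, k):
    result = [[1.0, 0.0], [0.0, 1.0]]  # A^0 = I
    for _ in range(k):
        result = mat_mul(result, A)
    return result

def at_most(x, y, rel=1e-9):
    """x <= y up to floating-point rounding."""
    return x <= y * (1 + rel) + 1e-12

def check_lemma(trials=1000, seed=2):
    rng = random.Random(seed)

    def random_matrix():
        return [[rng.uniform(-2, 2) for _ in range(2)] for _ in range(2)]

    for _ in range(trials):
        A, B = random_matrix(), random_matrix()
        v = [rng.uniform(-2, 2), rng.uniform(-2, 2)]
        norm_a = operator_norm_2x2(A)
        # Part 1: |Av| <= ||A|| |v|
        if not at_most(math.hypot(*mat_vec(A, v)), norm_a * math.hypot(*v)):
            return False
        # Part 2: ||AB|| <= ||A|| ||B||
        if not at_most(operator_norm_2x2(mat_mul(A, B)), norm_a * operator_norm_2x2(B)):
            return False
        # Part 3: ||A^n|| <= ||A||^n for a few exponents n
        for n in range(1, 8):
            if not at_most(operator_norm_2x2(mat_pow(A, n)), norm_a ** n):
                return False
    return True

print(check_lemma())
```

Part 3 is what makes the exponential series tractable: it bounds $\|A^n / n!\|$ by $\|A\|^n / n!$, the terms of the ordinary series for $e^{\|A\|}$.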