We have $\lim_{n\to\infty} F_{n+1}/F_n = \frac{1+\sqrt{5}}{2}$. This expression is the "golden ratio" $1.618\ldots$.

37. Eigenapplications, 3: Dynamics

We now turn to dynamics. Let $v$ be a vector and $A$ be a matrix. Consider the sequence $v, Av, A^2v, A^3v, \ldots$. We'll investigate what happens to this sequence $A^nv$ as $n\to\infty$.

Example: Fibonacci sequence

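Let $A=\begin{pmatrix}0&1\\1&1\end{pmatrix}$ and $v=\begin{pmatrix}1\\1\end{pmatrix}$. We get $Av=\begin{pmatrix}1\\2\end{pmatrix}$, $A^2v=\begin{pmatrix}2\\3\end{pmatrix}$, $A^3v=\begin{pmatrix}3\\5\end{pmatrix}$ and, more generally, $A^nv=\begin{pmatrix}F_n\\F_{n+1}\end{pmatrix}$ where $F_0=1$, $F_1=1$, $F_2=2$, $F_3=3$, $F_4=5$, $F_5=8$, $\ldots$ is the Fibonacci sequence.

Why are we getting the Fibonacci numbers? The formula $A^nv=\begin{pmatrix}F_n\\F_{n+1}\end{pmatrix}$ holds for $n=0$. Suppose it is true for some value of $n$; we'll prove it's true for all values of $n$ by induction: $$A^{n+1}v=A(A^nv)=\begin{pmatrix}0&1\\1&1\end{pmatrix}\begin{pmatrix}F_n\\F_{n+1}\end{pmatrix}=\begin{pmatrix}F_{n+1}\\F_n+F_{n+1}\end{pmatrix}=\begin{pmatrix}F_{n+1}\\F_{n+2}\end{pmatrix},$$ where we used the recursive formula $F_{n+2}=F_{n+1}+F_n$ which defines the Fibonacci sequence.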
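The induction can be checked by brute force. Here is a minimal Python sketch, assuming the matrix $A=\begin{pmatrix}0&1\\1&1\end{pmatrix}$, the starting vector $v=(1,1)$ and the convention $F_0=F_1=1$; the helper names `mat_vec`, `orbit` and `fibonacci` are made up for this illustration.

```python
def mat_vec(A, v):
    """Multiply a 2x2 matrix (given as a tuple of rows) by a 2-vector."""
    return (A[0][0] * v[0] + A[0][1] * v[1],
            A[1][0] * v[0] + A[1][1] * v[1])


def orbit(A, v, steps):
    """Return the list [v, Av, A^2 v, ..., A^steps v]."""
    out = [v]
    for _ in range(steps):
        v = mat_vec(A, v)
        out.append(v)
    return out


def fibonacci(count):
    """Return [F_0, ..., F_{count-1}] with the convention F_0 = F_1 = 1."""
    fibs = [1, 1]
    while len(fibs) < count:
        fibs.append(fibs[-1] + fibs[-2])
    return fibs[:count]


A = ((0, 1), (1, 1))  # assumed matrix
v = (1, 1)            # assumed starting vector

F = fibonacci(10)
for n, w in enumerate(orbit(A, v, 8)):
    # Each A^n v should equal (F_n, F_{n+1}).
    print(n, w, w == (F[n], F[n + 1]))
```

Working with integers keeps every entry exact, so the comparison in the last line is an honest equality test rather than a floating-point approximation.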

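As $n\to\infty$, both entries of the vector $A^nv$ tend to infinity, but they do so in a particular way: the ratio $F_{n+1}/F_n$ of the two entries tends to the golden ratio $\frac{1+\sqrt{5}}{2}$.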

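Write $v$ as $\alpha u_1+\beta u_2$ where $u_1$ and $u_2$ are the $\lambda_1$- and $\lambda_2$-eigenvectors of $A$. We'll figure out what these eigenvectors and eigenvalues are later.

Now $A^nv=\alpha A^nu_1+\beta A^nu_2$. We have $Au_1=\lambda_1u_1$, $A^2u_1=\lambda_1^2u_1$, and by induction we get $A^nu_1=\lambda_1^nu_1$ (and similarly $A^nu_2=\lambda_2^nu_2$). Therefore $A^nv=\alpha\lambda_1^nu_1+\beta\lambda_2^nu_2$.

I claim that $\lambda_1=\frac{1+\sqrt{5}}{2}$ and $\lambda_2=\frac{1-\sqrt{5}}{2}$. Therefore:

- $\lambda_1>1$, so $\lambda_1^n\to\infty$,
- $\lambda_2^n\to 0$ as $n\to\infty$. Note that $\lambda_2$ is negative, so its powers keep switching sign, but its absolute value is less than 1, so the absolute values of its powers get smaller and smaller as $n\to\infty$.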

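Therefore $\lim_{n\to\infty}F_{n+1}/F_n$ is the limit of the slopes of the vectors $A^nv=\alpha\lambda_1^nu_1+\beta\lambda_2^nu_2$, and the $\beta\lambda_2^nu_2$ term is going to zero, so in the limit we just get the slope of the vector $\alpha\lambda_1^nu_1$, which is just a rescaling of $u_1$. Since rescaling doesn't change the slope, we get $$\lim_{n\to\infty}\frac{F_{n+1}}{F_n}=\text{slope of }u_1.$$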
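To see the convergence numerically, the sketch below prints how far each slope $F_{n+1}/F_n$ is from $\frac{1+\sqrt{5}}{2}$. It assumes the convention $F_0=F_1=1$; the names `PHI` and `slopes` are illustrative only.

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # the claimed limit of the slopes


def slopes(steps):
    """Slopes F_{n+1}/F_n of the vectors A^n v for n = 0..steps (F_0 = F_1 = 1)."""
    a, b = 1, 1
    out = []
    for _ in range(steps + 1):
        out.append(b / a)
        a, b = b, a + b  # same update as applying A to (F_n, F_{n+1})
    return out


errors = [s - PHI for s in slopes(20)]
for n, e in enumerate(errors):
    # The sign flips at every step while the size shrinks.
    print(n, f"{e:+.3e}")
```

The alternating sign of the error reflects the negative eigenvalue: the component along the second eigenvector changes sign at each step while shrinking in size.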

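We therefore need to figure out the slope of $u_1$ (and verify the claim about eigenvalues). The characteristic polynomial of $A$ is $\det\begin{pmatrix}-t&1\\1&1-t\end{pmatrix}=t^2-t-1$, whose roots are $\frac{1\pm\sqrt{5}}{2}$ as required. The eigenvectors are $\begin{pmatrix}1\\ \frac{1\pm\sqrt{5}}{2}\end{pmatrix}$, so $u_1$ (corresponding to the plus sign) has slope $\frac{1+\sqrt{5}}{2}$, as required.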
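The whole argument can be sanity-checked in a few lines. This is a sketch under the assumptions $A=\begin{pmatrix}0&1\\1&1\end{pmatrix}$, $v=(1,1)$, with eigenvectors normalised as $(1,\lambda)$; the function names are hypothetical. It solves $v=\alpha u_1+\beta u_2$ for the coefficients and compares $\alpha\lambda_1^nu_1+\beta\lambda_2^nu_2$ with the result of applying $A$ repeatedly.

```python
import math

SQRT5 = math.sqrt(5)
LAM1 = (1 + SQRT5) / 2  # root of t^2 - t - 1 with the plus sign
LAM2 = (1 - SQRT5) / 2  # root of t^2 - t - 1 with the minus sign
U1 = (1.0, LAM1)        # eigenvector for LAM1, slope LAM1
U2 = (1.0, LAM2)        # eigenvector for LAM2, slope LAM2


def coefficients(v):
    """Solve v = alpha*U1 + beta*U2 for (alpha, beta)."""
    # First entries:  alpha + beta = v[0]
    # Second entries: alpha*LAM1 + beta*LAM2 = v[1]
    alpha = (v[1] - LAM2 * v[0]) / (LAM1 - LAM2)
    beta = v[0] - alpha
    return alpha, beta


def closed_form(v, n):
    """Evaluate alpha*LAM1^n*U1 + beta*LAM2^n*U2."""
    alpha, beta = coefficients(v)
    return (alpha * LAM1**n * U1[0] + beta * LAM2**n * U2[0],
            alpha * LAM1**n * U1[1] + beta * LAM2**n * U2[1])


def iterate(v, n):
    """Apply A = [[0, 1], [1, 1]] to v exactly n times."""
    x, y = v
    for _ in range(n):
        x, y = y, x + y  # A sends (x, y) to (y, x + y)
    return (x, y)


for n in range(10):
    print(n, iterate((1, 1), n), closed_form((1, 1), n))
```

The two columns agree up to floating-point rounding, which is what the eigenvector decomposition predicts.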

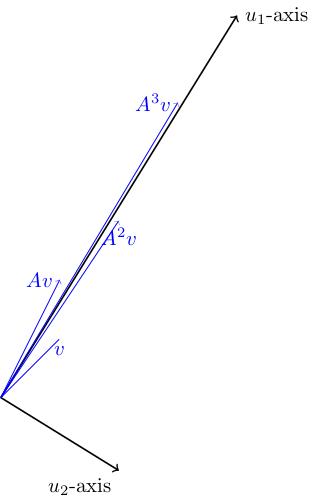

Here's a picture of the eigenlines (orthogonal to one another because the matrix $A$ is symmetric) and the positions of $v, Av, A^2v, \ldots$. You can see that these vectors get closer and closer to the $\lambda_1$-eigenline (and stretched out in the $u_1$-direction). They move from side to side of this axis because the sign of $\lambda_2$ is negative. So $A^nv$ gets more and more parallel to $u_1$ as $n\to\infty$.

Arnold cat map

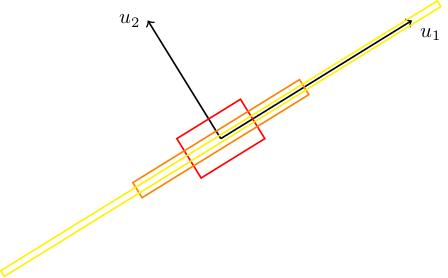

Here's another nice example, due to Vladimir Arnold. Consider $A=\begin{pmatrix}2&1\\1&1\end{pmatrix}$. This has eigenvalues $\lambda_1=\frac{3+\sqrt{5}}{2}$ and $\lambda_2=\frac{3-\sqrt{5}}{2}$: one of these is bigger than 1, the other is positive but less than 1. Here are the eigenlines, with a square $S$ drawn on (whose sides are parallel to the eigenlines). We also draw $A(S)$ and $A^2(S)$. We can see that it gets stretched in the $u_1$-direction and squashed in the $u_2$-direction (because $\lambda_1>1$ and $\lambda_2<1$). In the limit, $A^n(S)$ gets thinner and thinner and closer to the $\lambda_1$-eigenline.
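The stretching and squashing can be quantified with a short sketch, assuming the cat map matrix is $\begin{pmatrix}2&1\\1&1\end{pmatrix}$ and that the square has unit side length (both assumptions made for this illustration, as are the function names). Because the sides of the square lie along the eigenlines, the image after $n$ steps is a rectangle whose sides are scaled by $\lambda_1^n$ and $\lambda_2^n$.

```python
import math

A = ((2, 1), (1, 1))  # assumed cat map matrix


def eigenvalues_2x2(M):
    """Roots of t^2 - trace*t + det, for a 2x2 matrix with real eigenvalues."""
    tr = M[0][0] + M[1][1]
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    disc = math.sqrt(tr * tr - 4 * det)
    return (tr + disc) / 2, (tr - disc) / 2


lam1, lam2 = eigenvalues_2x2(A)


def side_lengths(n, side=1.0):
    """Side lengths of the rectangle A^n(S), for a square S aligned with the eigenlines."""
    return side * lam1**n, side * lam2**n


for n in range(4):
    long_side, short_side = side_lengths(n)
    # The product of the two sides is the area of A^n(S).
    print(n, long_side, short_side, long_side * short_side)
```

The product of the two side lengths stays equal to the original area because $\lambda_1\lambda_2=\det A=1$: the rectangle gets longer exactly as fast as it gets thinner.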

This is called the Arnold cat map because of the following strange phenomenon. Take an infinite grid of squares in $\mathbb{R}^2$, take a picture of a cat, and put it into every square. Apply $A$ to this grid of cats. The cats will get stretched and squashed in the eigendirections. Pick one of our original squares and look at what's there. We see a bunch of cats all chopped up and stretched and squished back into that square in some way. Now repeat, and repeat. What we see in our square is absolute carnage for a long time. But, amazingly, at some point, our cat reappears almost identically to how it looked to begin with. This is not because of any periodicity: $A^n$ is not the identity for any $n>0$. This is instead an instance of "Poincaré recurrence": a phenomenon in dynamical systems which goes way beyond anything we're discussing in this course.
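A related experiment is easy to run on a computer, with one important change of setting: if the picture is made of $N\times N$ pixels, the map only permutes finitely many pixel positions. The sketch below assumes the cat map matrix $\begin{pmatrix}2&1\\1&1\end{pmatrix}$ and models the square as integer coordinates mod $N$; this discretisation and the names `cat_step` and `return_time` are assumptions of the illustration, not part of the discussion above. In this pixel model the picture returns exactly after finitely many steps, even though $A^n$ is never the identity as a real matrix.

```python
def cat_step(point, size):
    """Send the pixel (x, y) to (2x + y, x + y), wrapping both coordinates mod size."""
    x, y = point
    return ((2 * x + y) % size, (x + y) % size)


def return_time(size):
    """Smallest n >= 1 after which every pixel of a size x size grid is back in place."""
    if size < 2:
        raise ValueError("size must be at least 2")
    # The map is linear mod size, so it is enough to follow the two basis pixels.
    e1, e2 = (1, 0), (0, 1)
    p, q, n = cat_step(e1, size), cat_step(e2, size), 1
    while (p, q) != (e1, e2):
        p, q = cat_step(p, size), cat_step(q, size)
        n += 1
    return n


for size in (2, 3, 5, 10, 100):
    print(size, return_time(size))
```

The loop always terminates because the matrix has determinant 1, so it is invertible mod $N$ and acts as a permutation of the finite grid.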