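We will change notation slightly and write $W_\lambda$ for $W_{k,\ell}$, where $\lambda(\theta)=k\theta_1+\ell\theta_2$ is the linear function determined by the pair $(k,\ell)$. Bundling the two integers together in this way will make life easier in future (e.g. when we have more than two integer weights).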

Root vectors acting on weight spaces

Review

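Given a representation $R\colon SU(3)\to GL(V)$, we have seen that $V=\bigoplus_{k,\ell}W_{k,\ell}$ where

$$W_{k,\ell}=\left\{v\in V\ :\ R(\exp(iH_\theta))v=e^{i(k\theta_1+\ell\theta_2)}v\ \text{for all }\theta\right\}$$

and $H_\theta=\mathrm{diag}(\theta_1,\theta_2,\theta_3)$ with $\theta_3=-\theta_1-\theta_2$, or equivalently

$$R_*^{\mathbf{C}}(H_\theta)v=(k\theta_1+\ell\theta_2)v.$$

Remember that $H_\theta$ isn't in $\mathfrak{su}(3)$, rather it's in $\mathfrak{sl}(3,\mathbf{C})$, which is why we're using the complexified representation $R_*^{\mathbf{C}}$.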

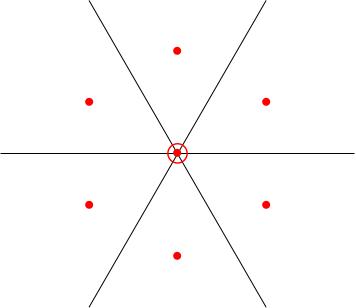

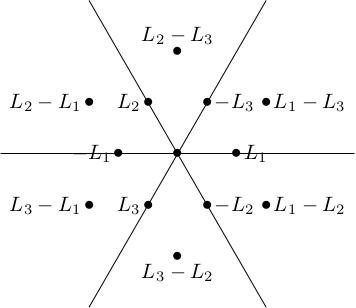

We were drawing the weights on a triangular lattice. For example, the weight diagram for the adjoint representation was:

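Define $L_1(\theta)=\theta_1$, $L_2(\theta)=\theta_2$, $L_3(\theta)=\theta_3=-\theta_1-\theta_2$. These are the $\lambda$s corresponding to $(k,\ell)=(1,0)$, $(0,1)$, $(-1,-1)$ respectively.

With this notation, the weights of the standard representation are $L_1,L_2,L_3$ and the weights of the adjoint representation are $L_i-L_j$ (for $i\neq j$, together with the zero weight) because $[H_\theta,E_{ij}]=(\theta_i-\theta_j)E_{ij}$, where $E_{ij}$ is the matrix with a 1 in position $(i,j)$ and zeros elsewhere.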
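The bracket relation behind the adjoint weights can be checked by hand or by machine. The following is a minimal sketch in plain Python, assuming $H_\theta=\mathrm{diag}(\theta_1,\theta_2,\theta_3)$ with $\theta_1+\theta_2+\theta_3=0$ and $E_{ij}$ the matrix with a single 1 in position $(i,j)$; the helper names (`elementary`, `bracket`, and so on) and the sample values of $\theta$ are illustrative choices, not part of the notes.

```python
def elementary(i, j, n=3):
    """E_ij (1-indexed): a single 1 in position (i, j), zeros elsewhere."""
    return [[1 if (r, c) == (i - 1, j - 1) else 0 for c in range(n)]
            for r in range(n)]

def diagonal(theta):
    """Diagonal matrix H_theta with the entries of theta on the diagonal."""
    n = len(theta)
    return [[theta[r] if r == c else 0 for c in range(n)] for r in range(n)]

def matmul(a, b):
    n = len(a)
    return [[sum(a[r][k] * b[k][c] for k in range(n)) for c in range(n)]
            for r in range(n)]

def bracket(a, b):
    """Commutator [a, b] = ab - ba."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(ab, ba)]

def scale(s, a):
    return [[s * x for x in row] for row in a]

# Sample integer values (an arbitrary choice) with theta_1 + theta_2 + theta_3 = 0.
theta = (2, 5, -7)
H = diagonal(theta)

def root_check(i, j):
    """True if [H_theta, E_ij] = (theta_i - theta_j) E_ij for the sample theta."""
    E = elementary(i, j)
    return bracket(H, E) == scale(theta[i - 1] - theta[j - 1], E)

all_roots_ok = all(root_check(i, j)
                   for i in range(1, 4) for j in range(1, 4) if i != j)
print(all_roots_ok)
```

A single sample $\theta$ does not prove the identity, but the same computation goes through symbolically: $H_\theta E_{ij}=\theta_iE_{ij}$ and $E_{ij}H_\theta=\theta_jE_{ij}$.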

The analogue of X and Y

Statement

For $SU(2)$, the adjoint representation has weight spaces $W_{-2}$, $W_0$ and $W_2$. The elements $X\in W_2$ and $Y\in W_{-2}$ played an important role in studying the representations of $SU(2)$: $X$ moved vectors from weight spaces with weight $k$ to weight spaces with weight $k+2$ and $Y$ moved them back again.

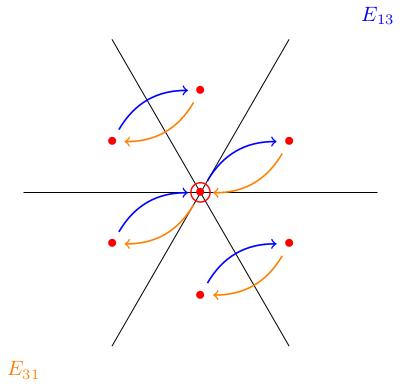

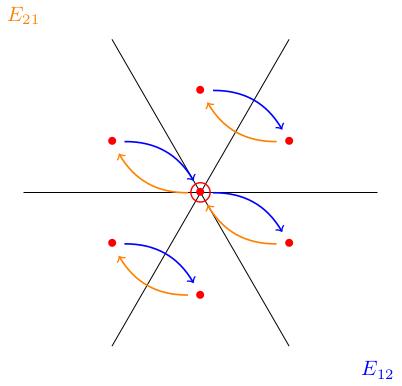

The analogue for $SU(3)$ will be to see how the weight vectors of the adjoint representation act on the weight spaces of another representation.

Lemma. Given a complex representation $R\colon SU(3)\to GL(V)$, $R_*^{\mathbf{C}}(E_{ij})$ sends $W_\lambda$ to $W_{\lambda+L_i-L_j}$.

Example: Adjoint representation

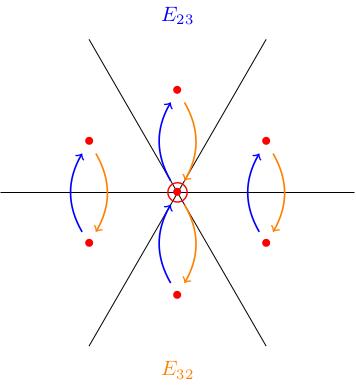

We illustrate the lemma in the figures below, showing how the matrices $E_{ij}$ act in the adjoint representation. For example $E_{ij}$ and $E_{ji}$ translate weight spaces forwards and backwards along the $L_i-L_j$ direction.

Example: standard representation

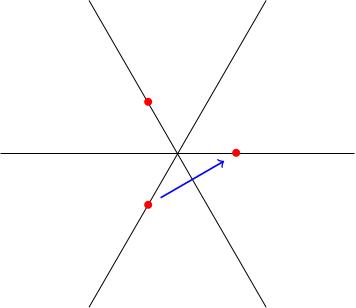

The figure below shows the standard representation. There are three weights $L_1,L_2,L_3$, with weight vectors the standard basis vectors $e_1,e_2,e_3$. Let's see how $E_{12}$ acts. It sends $e_1$ and $e_3$ to zero and it sends $e_2$ to $e_1$. Correspondingly, we draw an arrow in the $L_1-L_2$-direction in the weight diagram, as dictated by the lemma.

We know that $E_{12}$ sends $W_{L_1}$ to $W_{2L_1-L_2}$ by the lemma, but $W_{2L_1-L_2}=0$ which is why $E_{12}e_1=0$. In terms of the figure, the vector $L_1-L_2$ starting at $L_1$ ends at a lattice point which is not in the weight diagram.
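The standard representation example can be sketched in a few lines of plain Python. This assumes the sample choice $E_{12}$, the standard basis $e_1,e_2,e_3$ as weight vectors, and the coordinates $L_1=(1,0)$, $L_2=(0,1)$, $L_3=(-1,-1)$ for the weights; the variable names are illustrative only.

```python
def elementary(i, j, n=3):
    """E_ij (1-indexed): a single 1 in position (i, j), zeros elsewhere."""
    return [[1 if (r, c) == (i - 1, j - 1) else 0 for c in range(n)]
            for r in range(n)]

def apply(m, v):
    """Matrix-vector product m v."""
    return [sum(m[r][c] * v[c] for c in range(len(v))) for r in range(len(m))]

# Standard basis vectors e_1, e_2, e_3, keyed by index.
basis = {k: [1 if r == k - 1 else 0 for r in range(3)] for k in (1, 2, 3)}

# Weights of the standard representation in (k, l) coordinates (assumed convention).
L = {1: (1, 0), 2: (0, 1), 3: (-1, -1)}

E12 = elementary(1, 2)
images = {k: apply(E12, basis[k]) for k in basis}

# The lemma says E_12 shifts weights by L_1 - L_2.
shift = (L[1][0] - L[2][0], L[1][1] - L[2][1])
targets = {k: (L[k][0] + shift[0], L[k][1] + shift[1]) for k in L}

# A shifted weight that is not a weight of the representation forces the image to vanish.
in_diagram = {k: targets[k] in L.values() for k in L}

for k in (1, 2, 3):
    print(f"e_{k}: image {images[k]}, target weight {targets[k]}, "
          f"in diagram: {in_diagram[k]}")
```

Only $e_2$ has a shifted weight that lands back in the weight diagram (at $L_1$), and it is the only basis vector with nonzero image.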

Proof of lemma

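If $v\in W_\lambda$ then we need to show $R_*^{\mathbf{C}}(E_{ij})v\in W_{\lambda+L_i-L_j}$.

We have $v\in W_\lambda$ if and only if $R_*^{\mathbf{C}}(H_\theta)v=\lambda(\theta)v$ for all $\theta$.

We have $R_*^{\mathbf{C}}(E_{ij})v\in W_{\lambda+L_i-L_j}$ if and only if $R_*^{\mathbf{C}}(H_\theta)R_*^{\mathbf{C}}(E_{ij})v=(\lambda(\theta)+\theta_i-\theta_j)R_*^{\mathbf{C}}(E_{ij})v$ for all $\theta$.

We have $[H_\theta,E_{ij}]=(\theta_i-\theta_j)E_{ij}$, because $E_{ij}\in W_{L_i-L_j}$ for the adjoint representation. Applying $R_*^{\mathbf{C}}$ we get

$$[R_*^{\mathbf{C}}(H_\theta),R_*^{\mathbf{C}}(E_{ij})]=(\theta_i-\theta_j)R_*^{\mathbf{C}}(E_{ij}).$$

Therefore

$$R_*^{\mathbf{C}}(H_\theta)R_*^{\mathbf{C}}(E_{ij})v=R_*^{\mathbf{C}}(E_{ij})R_*^{\mathbf{C}}(H_\theta)v+(\theta_i-\theta_j)R_*^{\mathbf{C}}(E_{ij})v.$$

Since $v\in W_\lambda$, we have $R_*^{\mathbf{C}}(H_\theta)v=\lambda(\theta)v$, so

$$R_*^{\mathbf{C}}(H_\theta)R_*^{\mathbf{C}}(E_{ij})v=(\lambda(\theta)+\theta_i-\theta_j)R_*^{\mathbf{C}}(E_{ij})v.$$

This shows that $R_*^{\mathbf{C}}(E_{ij})v\in W_{\lambda+L_i-L_j}$ as required.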

We have used two crucial things:

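- $R_*^{\mathbf{C}}$ is a representation of Lie algebras, so it preserves brackets;

- $E_{ij}\in W_{L_i-L_j}$ for the adjoint representation, in other words, $[H_\theta,E_{ij}]=(\theta_i-\theta_j)E_{ij}$.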

The same proof shows more generally that if $X$ is a weight vector of the adjoint representation (root vector) with weight (root) $\alpha$ then $R_*^{\mathbf{C}}(X)$ sends weight vectors in $W_\lambda$ (for any representation) to weight vectors in $W_{\lambda+\alpha}$.
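As a sanity check, the lemma can be tested in the adjoint representation itself, where a root vector $E_{ij}$ acts on another root vector $E_{kl}$ by the bracket. The sketch below, in plain Python, assumes $H_\theta=\mathrm{diag}(\theta_1,\theta_2,\theta_3)$ with one arbitrary sample $\theta$ summing to zero; it checks that $[E_{ij},E_{kl}]$ is either zero or a weight vector whose weight is the sum of the two roots. All helper names are illustrative.

```python
from itertools import product

def elementary(i, j, n=3):
    """E_ij (1-indexed): a single 1 in position (i, j), zeros elsewhere."""
    return [[1 if (r, c) == (i - 1, j - 1) else 0 for c in range(n)]
            for r in range(n)]

def matmul(a, b):
    n = len(a)
    return [[sum(a[r][k] * b[k][c] for k in range(n)) for c in range(n)]
            for r in range(n)]

def bracket(a, b):
    """Commutator [a, b] = ab - ba."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(ab, ba)]

def scale(s, a):
    return [[s * x for x in row] for row in a]

# Sample integer values (an arbitrary choice) with theta_1 + theta_2 + theta_3 = 0.
theta = (2, 5, -7)
H = [[theta[r] if r == c else 0 for c in range(3)] for r in range(3)]
ZERO = [[0] * 3 for _ in range(3)]

def root(i, j):
    """Value of the root L_i - L_j on the sample theta."""
    return theta[i - 1] - theta[j - 1]

pairs = [(i, j) for i, j in product(range(1, 4), repeat=2) if i != j]

lemma_holds = True
nonzero_brackets = 0
for (i, j), (k, l) in product(pairs, repeat=2):
    image = bracket(elementary(i, j), elementary(k, l))
    # The image should have weight (L_i - L_j) + (L_k - L_l), or be zero.
    expected = scale(root(i, j) + root(k, l), image)
    if bracket(H, image) != expected:
        lemma_holds = False
    if image != ZERO:
        nonzero_brackets += 1

print(lemma_holds, nonzero_brackets)
```

When the two roots sum to zero, as for $[E_{12},E_{21}]=E_{11}-E_{22}$, the image is diagonal and so lies in the zero weight space, consistent with the lemma.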

Pre-class exercises

What do you think the weight diagram of the standard 4-dimensional representation of $SU(4)$ would look like? How do you think the matrices $E_{ij}$ act?